In this article, you’ll complete the following tasks:

- Create a GitHub Action to implement a build pipeline.
- Modify the coupon service code to trigger the build workflow.
- Monitor the build workflow’s progress in real time.
- Update a failing unit test to fix the build.

Create the build action

Create a GitHub Action for the build with the following steps:

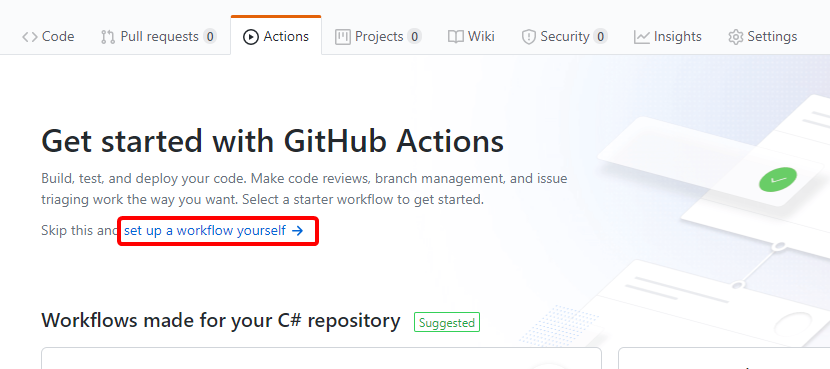

- Select the Actions tab in your repository and select the set up a workflow yourself link:

- Replace the YAML in the editor with the following YAML:

      name: eShop build

      on:
        push:
          paths:
          - 'src/Services/Coupon/**'
          - 'tests/Services/Coupon/**'
          branches: [ main ]

      jobs:
        build-and-push-docker-image:
          runs-on: ubuntu-latest
          steps:
          - name: Get code from the repository
            uses: actions/checkout@v1
            with:
              ref: main

          - name: Run unit tests
            run: dotnet test tests/Services/Coupon/*.Tests/*.csproj
            env:
              DOTNET_CLI_TELEMETRY_OPTOUT: true
              DOTNET_NOLOGO: true

          - name: Build and push Docker image
            uses: docker/build-push-action@v1.1.0
            with:
              username: ${{ secrets.REGISTRY_USERNAME }}
              password: ${{ secrets.REGISTRY_PASSWORD }}
              registry: ${{ secrets.REGISTRY_LOGIN_SERVER }}
              path: .
              dockerfile: './src/Services/Coupon/Coupon.API/Dockerfile.acr'
              repository: 'coupon.api'
              tags: 'linux-latest'
              push: true

The preceding YAML defines a GitHub Action that:

- Is triggered when a commit is pushed to the coupon service’s source code or unit tests in the `main` branch.
- Defines step-specific environment variables. For example, the `Run unit tests` step defines `DOTNET_CLI_TELEMETRY_OPTOUT` and `DOTNET_NOLOGO`. For the .NET Core CLI, those environment variables opt out of usage data collection and suppress the first-run telemetry message, respectively.
- Has one job (a set of steps that execute on the same workflow runner) named `build-and-push-docker-image`. The job:
  - Executes the xUnit tests for the coupon service.
  - Builds the Docker image and pushes it to an ACR instance. ACR is a private container registry used for the modified coupon container image. You don’t have permission to modify the Microsoft-owned container registry from which the original image was retrieved.
  - Runs in an `ubuntu-latest` runner and has three steps, two of which use actions available from the GitHub Actions marketplace:
    - `Get code from the repository` uses the `actions/checkout@v1` action to check out the `main` branch.
    - `Build and push Docker image` uses the `docker/build-push-action@v1.1.0` action to build the container image and push it to ACR.
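
The step-level `env` block is one of several scopes in which GitHub Actions accepts environment variables. As a rough sketch, and not part of this exercise's workflow, the same two variables could instead be declared once at the job level so that every step in the job sees them:

```yaml
# Sketch only: a variant of the build job with `env` moved from the step to the job.
jobs:
  build-and-push-docker-image:
    runs-on: ubuntu-latest
    env:
      # Now visible to every step in this job, not just the test step.
      DOTNET_CLI_TELEMETRY_OPTOUT: true
      DOTNET_NOLOGO: true
    steps:
    - name: Run unit tests
      run: dotnet test tests/Services/Coupon/*.Tests/*.csproj
```

Keeping the variables at the step level, as this exercise does, places the setting next to the only command that needs it.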

Important

Trigger conditions and other artifacts of GitHub Actions or workflows depend on the apps and environments. For ease of understanding, details are kept simple here. Both the build and the deploy workflows are scoped to coupon service changes because all the microservices are kept under a single repository. In an actual production scenario, each microservice is kept in a separate repository.
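
To illustrate how trigger conditions might vary between environments, the following sketch extends the `on` block with two additional triggers. Treat it as an assumption about what a team could want, not as part of this exercise: `pull_request` would run the workflow for pull requests that target `main`, and `workflow_dispatch` would allow a manual run from the Actions tab.

```yaml
# Sketch only: alternative triggers for the same workflow.
on:
  push:
    branches: [ main ]
    paths:
    - 'src/Services/Coupon/**'
    - 'tests/Services/Coupon/**'
  # Assumption: the team also wants the workflow to run on pull requests to main.
  pull_request:
    branches: [ main ]
    paths:
    - 'src/Services/Coupon/**'
    - 'tests/Services/Coupon/**'
  # Allows the workflow to be started manually.
  workflow_dispatch:
```

If a pull request trigger were added, the step that pushes the image would likely need a condition of its own so that unmerged changes aren't published.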

- Replace the default workflow file name of main.yml with build.yml.

- Select the Start commit button, select the Commit directly to the `main` branch radio button, and select Commit new file to save the workflow file.

Trigger a build

You’ve finished creating the build workflow for your CI/CD pipeline on Cloud Hosting. The Marketing department wants to start a campaign to better track discount code usage. With this feature, Marketing can better understand which discount codes are most effective in boosting sales. To support this feature, make the following changes in the main branch:

- Select the Code tab in your fork of the repository.

- Select the edit icon to open the src/Services/Coupon/Coupon.API/Controllers/CouponController.cs file in the editor.

- Replace the comment `// Add LogInformation call` with the following code:

      _logger.LogInformation("Applying coupon {CouponCode}", code);

  The preceding code logs the discount code being applied.

- Select the Commit directly to the `main` branch radio button and select the Commit changes button. The build workflow is triggered automatically.

Monitor the build

View the real-time progress of the build by completing the following steps:

- Select the Actions tab.

- Select the most recent workflow run listed for the eShop build workflow. The commit message used in the previous step becomes the run’s name.

- Select the build-and-push-docker-image job.

- Wait a few minutes. Notice that:

  - The build fails on the `Run unit tests` step.
  - The `Build and push Docker image` step doesn’t run because the previous step failed.
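
The skipped step reflects the default behavior of GitHub Actions: a step runs only if every earlier step in the job succeeded. The following minimal sketch makes that rule visible. The `Report failure` step and both `echo` commands are hypothetical placeholders, not part of this exercise's workflow.

```yaml
# Sketch only: how step conditions interact with a failing step.
steps:
- name: Run unit tests
  run: dotnet test tests/Services/Coupon/*.Tests/*.csproj

# No `if` is given, so this step is skipped when the step above fails.
- name: Build and push Docker image
  run: echo "placeholder for the build and push step"

# Hypothetical step: `failure()` is true when any earlier step in the job failed.
- name: Report failure
  if: failure()
  run: echo "Unit tests failed, so the image was not built."
```

With this arrangement, a failing test run would skip the image build but still execute the reporting step.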

Fix the build

- From the Code tab, edit the tests/Services/Coupon/Coupon.API.Tests/Controllers/CouponControllerTests.cs file. In the CouponControllerTests.cs file, notice that `Assert.True(false);` causes the unit test to fail. Replace that line with the following code:

      Assert.True(true);

  The preceding code causes the test to always pass. This test is for illustrative purposes only. Real tests should test actual functionality.

- Commit and push this change to the `main` branch. The build workflow is triggered automatically.

When the build completes successfully, all steps are prefixed with a green check mark. Expand any step to view the output generated during its execution.

Notes:

It’s possible to move the dotnet test command to the Dockerfile. In this example, you’re running dotnet test in the GitHub Action to:

- Understand how to execute .NET Core CLI commands in GitHub Actions.

- Understand how the failure of a step can prevent execution of the remaining build steps.

In this unit, you created a GitHub Action to build the coupon service. You added logging to the coupon service and saw how committing that code triggered the build workflow. Next, you fixed a failing unit test and triggered the build again. Finally, you learned how to monitor the build’s progress in real time.