Imagine you’re a software developer for an online retailer named eShopOnContainers. The retailer’s online storefront is a cloud-native, microservices-based ASP.NET Core app. To enhance the team’s agile development practices, you’ll implement a Continuous Integration and Continuous Deployment (CI/CD) pipeline. The pipeline runs a series of automated tasks that compile, test, configure, and deploy the app from the build environment through each subsequent environment. You’ve decided to use GitHub Actions to meet this requirement. Because each microservice can be deployed independently, you’ve also decided to start by enabling CI/CD for a single service.

This module guides you through the process of implementing a CI/CD pipeline using GitHub Actions. You’ll begin with a simplified, revamped version of eShopOnContainers—the companion reference app for the guide .NET Microservices: Architecture for Containerized .NET Applications. This new reference app version includes a discount coupon feature that can be used at checkout time in the shopping basket. The feature is supported by an ASP.NET Core web API known as the coupon service. CI/CD will be enabled for the coupon service in this module.

You’ll use your own Azure subscription to deploy the resources in this module. To estimate the expected costs for these resources, see the preconfigured Azure Calculator estimate of the resources that you’ll deploy. If you don’t have an Azure subscription, create a free account before you begin.

Set up the environment

In this unit, you’ll use a script to deploy the existing eShopOnContainers app to Azure Kubernetes Service (AKS).

Launch Azure Cloud Shell

- Open the Azure Cloud Shell in your browser.

- Select a directory with access to the Azure subscription in which you want to create resources.

- Select Bash from the environment drop-down in the upper left.

Run the deployment script

- In a new browser window, fork the repository github.com/MicrosoftDocs/mslearn-microservices-devops-aspnet-core to your own GitHub account. For instructions on forking, see Forking Projects.

- Run the following command in the command shell. When prompted for Repo URL, enter the URL of your fork created in the first step.

Bash

. <(wget -q -O - https://aka.ms/microservices-devops-aspnet-core-setup)

Tip

You can use the Copy button to copy commands to the clipboard. To paste, right-click on a new line in the Cloud Shell window and select Paste or use the Shift+Insert keyboard shortcut (⌘+V on macOS).

The preceding command retrieves and runs a setup script from a GitHub repository. The script completes the following steps:

- Installs the required version of the .NET Core SDK.

- Clones the eShopOnContainers app from your fork of the GitHub repository.

- Provisions AKS and Azure Container Registry (ACR) resources.

- Launches the Cloud Shell editor to view the code.

- Deploys the containers to AKS.

- Displays connection information upon completion.
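The leading `.` in the setup command sources the downloaded script into the current Cloud Shell session instead of running it in a child process, so variables and directory changes made by the script persist in your session. The following is a minimal sketch of that mechanism only. It uses a local stand-in function (`fake_setup_script`, hypothetical) in place of the real download, and the variable `demo_status` is an assumption for illustration, not something the actual setup script is known to define.

```shell
#!/usr/bin/env bash
# Hypothetical stand-in for the downloaded script; the real setup script's
# contents are not reproduced here.
fake_setup_script() {
  printf '%s\n' 'demo_status="configured"'
}

# Sourcing via process substitution: the script's lines run in THIS shell,
# so the variable it sets is still defined afterward.
. <(fake_setup_script)
sourced_value="${demo_status:-unset}"
echo "after sourcing: ${sourced_value}"

# Piping the same text to a child bash process: the variable is set only in
# the child and is gone once that process exits.
unset demo_status
fake_setup_script | bash
piped_value="${demo_status:-unset}"
echo "after piping:   ${piped_value}"
```

Process substitution (`<(...)`) is a Bash feature, which is one reason the steps above ask you to select Bash in Cloud Shell.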

Important

The script installs the required version of the .NET Core SDK alongside the version pre-installed in Azure Cloud Shell. To revert to the default configuration in Cloud Shell, see the instructions in the Summary unit.
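To see which SDK versions are present side by side, you can ask the .NET CLI to list them. This is a small sketch, assuming `dotnet` is on the `PATH` of your session. The function name `show_dotnet_sdks` is made up for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report the .NET SDKs visible to the current shell.
show_dotnet_sdks() {
  if command -v dotnet >/dev/null 2>&1; then
    echo "Installed SDKs:"
    dotnet --list-sdks      # one line per installed SDK version and its path
    echo "SDK selected in this directory:"
    dotnet --version        # the SDK version the CLI resolves to here
  else
    echo "dotnet was not found on PATH"
  fi
}

show_dotnet_sdks
```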

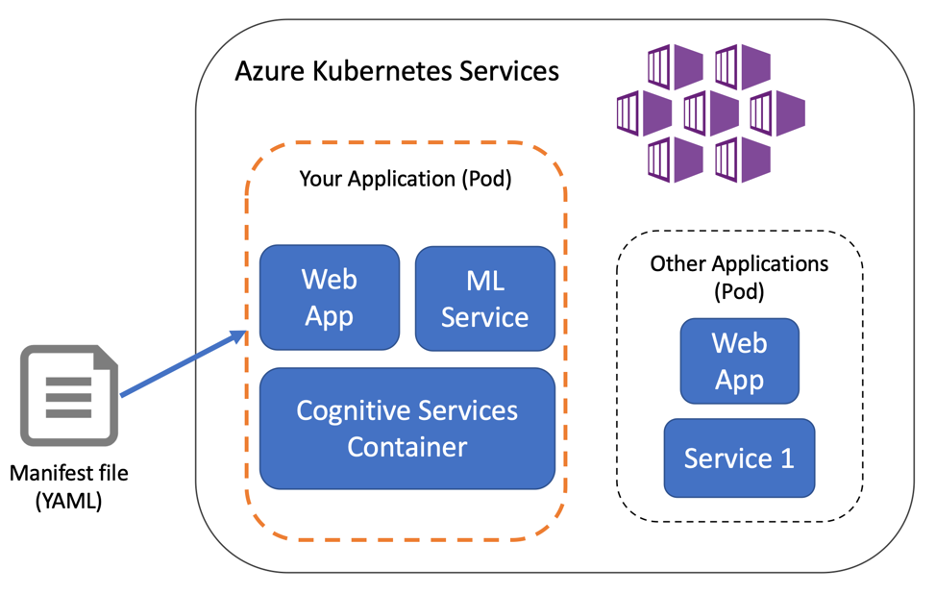

The script takes several minutes to complete. It deploys a modified version of the eShopOnContainers reference app. The solution architecture of the app is pictured in the following diagram:

This module focuses on adding CI/CD for the coupon service depicted in the preceding diagram.

Note

Non-blocking warnings are expected in the deployment process. If an unexpected exception occurs, you can reset any changes made by the script by running the following command:

cd ~ && \
  rm -rf ~/clouddrive/aspnet-learn && \
  az group delete --name eshop-learn-rg --yes
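After running the reset command, you may want to confirm that both the local clone and the resource group are gone. The sketch below is one possible check, using the directory and resource group names from the command above. The function name `check_cleanup` is hypothetical, and the resource group check is skipped when the `az` CLI is unavailable or the query fails (for example, when you aren't signed in).

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether the reset command left anything behind.
check_cleanup() {
  local dir="$1" rg="$2" exists

  if [ -e "$dir" ]; then
    echo "directory still present: $dir"
  else
    echo "directory removed: $dir"
  fi

  if ! command -v az >/dev/null 2>&1; then
    echo "az CLI not found; skipping resource group check"
    return 0
  fi

  # `az group exists` prints "true" or "false".
  if ! exists="$(az group exists --name "$rg" 2>/dev/null)"; then
    echo "could not query resource group: $rg"
    return 0
  fi

  if [ "$exists" = "true" ]; then
    echo "resource group still present: $rg"
  else
    echo "resource group removed: $rg"
  fi
}

check_cleanup "$HOME/clouddrive/aspnet-learn" "eshop-learn-rg"
```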